Instructional design theory provides a strong foundation for creating effective learning experiences; however, a gap remains between theory and practice (Wintersberg & Pittich, 2025). This challenge is central to Practitioner-Based Scholarship (PBS), which emphasizes the use of real-world data and reflection to apply theory in context and supports actionable insights that improve practice (Kennedy et al., 2019). Instructional Designers (IDs) are often expected to apply generalized research findings to improve course design, but without concrete knowledge of learner demographics and behaviors, these improvements fail to address the needs of individual learners. To apply best practices meaningfully, we must first understand how our learners engage with learning environments.

At Colorado State University, the Learning Production and Training Team supports online course design with limited access to student survey data. Without these feedback channels, our team asked: How can we better understand our students to make informed, evidence-based design decisions?

This paper shares our team’s response to the theory-to-practice gap: a systematic and replicable approach that draws on two unique projects, usability studies and learning analytics, to build a deeper understanding of learner behaviors. While our institutional barriers were unique, the strategies we used are not. We hope other teams can adapt elements of this PBS approach to address their own data and access challenges and ultimately bridge the theory-to-practice gap in ways that reflect their learners’ real experiences.

We present two case studies: the Usability Project and an Analytics Pilot. Each study highlights key findings, lessons learned through implementation, practical recommendations, and limitations encountered along the way. These methods help contextualize instructional design theory within our institution and enable practical, measurable improvements.

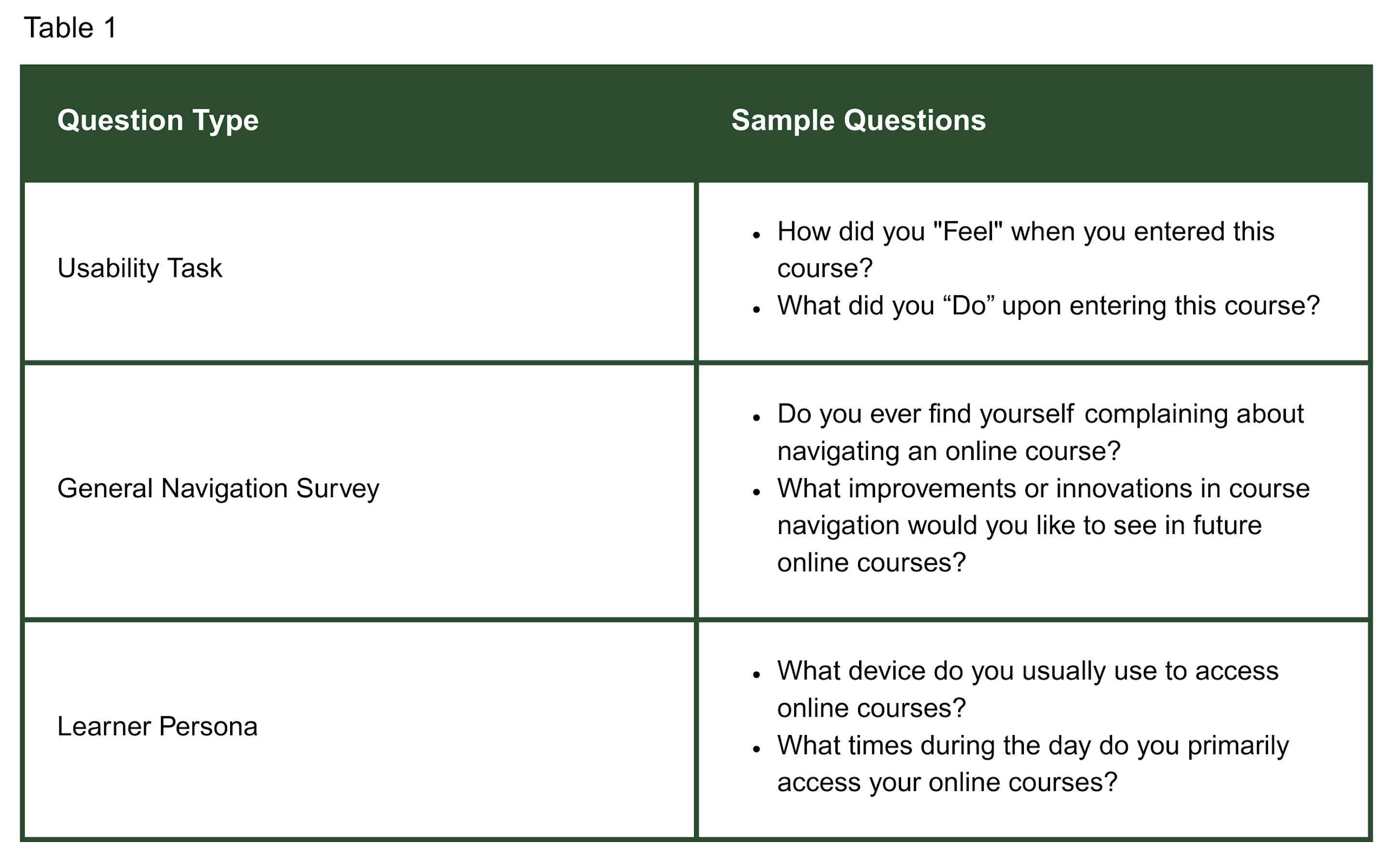

At the start of the Usability Project, we sought IRB approval and determined the project qualified as quality assurance for online course development. Student workers were invited to participate by completing a series of five structured reviews built from the question types in Table 1. These reviews were designed to provide targeted feedback for instructional design analysis. We collected data from 15 course navigation surveys across 13 courses, completed by 5 respondents in 2024 and 8 respondents in 2025. Students recorded their screens while thinking aloud during navigation, providing reactions, behavioral data, and task-oriented feedback. They also completed surveys about their personas and previous online course navigation experience. Through qualitative analysis, we compiled themes from the videos and coded transcripts in alignment with the goals of the project. Finally, the results were compared to 2024 findings, and Julius AI helped provide inferential insights.

Table 1 lists example questions from each survey. Each survey took a distinct approach to data collection, with minimal overlap in question types. Together, they served complementary purposes in understanding the students’ course usability experiences.

In the usability survey, students consistently preferred clear pathways to the content via the “Next” button or the left-side navigation. Additionally, instructor contact information was often difficult to locate, and course structure (week-module correlation) caused confusion. Students also noted the lack of assignment due dates and a course schedule, which caused frustration.

In the general surveys for learner personas and navigation experiences, we delineated three primary personas: the career-focused professional, the family-balanced scholar, and the traditional digital native. Among these learners, a significant portion used multiple devices, indicating the importance of designing learning environments that accommodate diverse device preferences. Table 2 presents key findings and their future design impact.

This feedback helped us, as IDs, ground our design methods in actual student behavior rather than theory-based needs. Our key takeaways centered on amplifying student voice in design. We began shifting development to focus on reducing cognitive load by making access to course materials explicit and using structured layouts.

The study had limited campus-wide inclusion, gathering feedback primarily from junior-level to graduate-level student workers within our unit. As a result, we missed input from new or first-time online students. Additionally, the courses reviewed were not in live settings, so they reflected development ideals rather than actual instructor delivery. While we cannot control live teaching environments, our goal was to optimize course design and to recommend that instructors maintain these improvements.

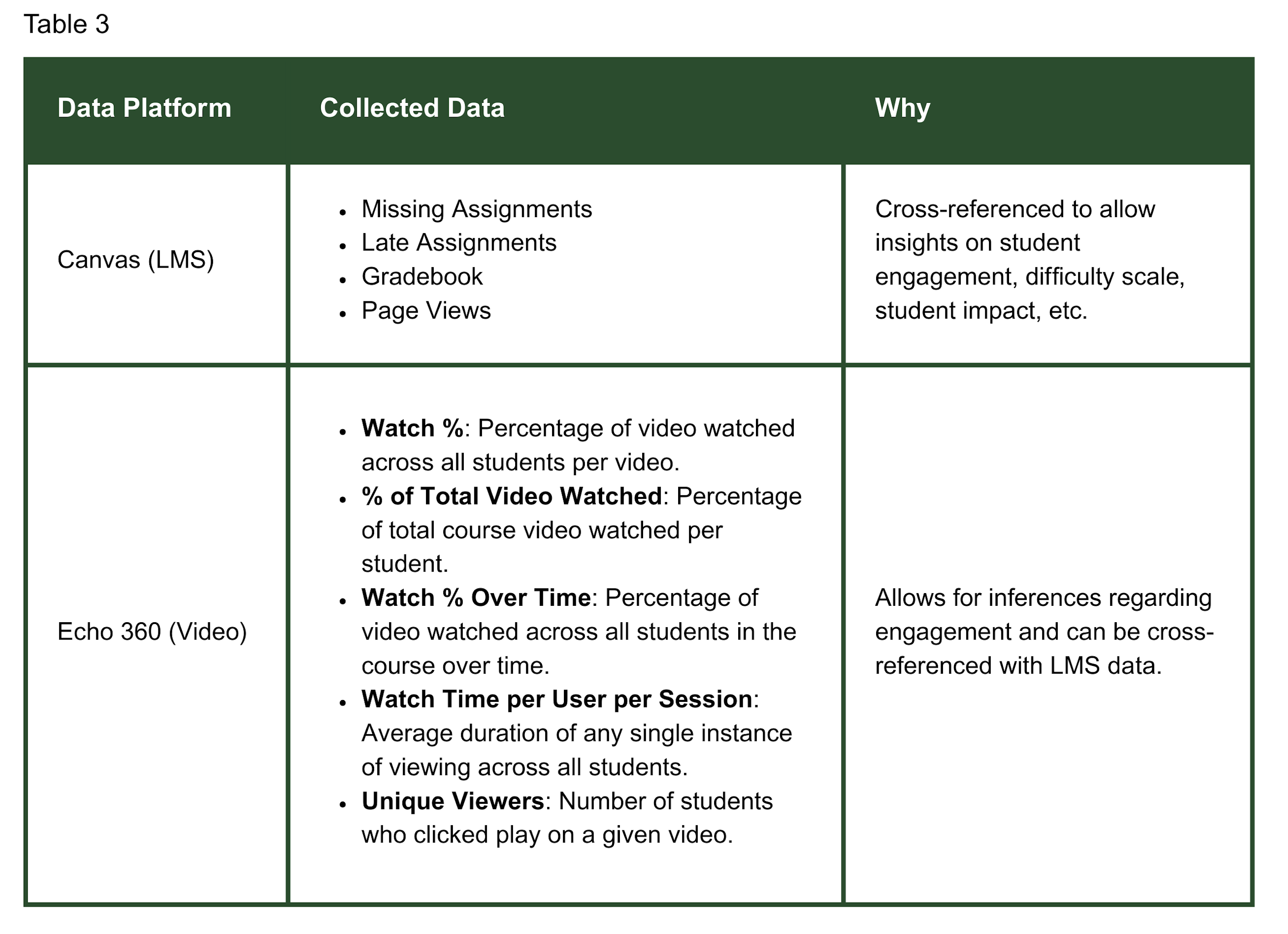

Our goal was to analyze learning analytics, including LMS and video data, from the most recent version of a course undergoing redesign. Once downloaded, the raw data was manually formatted, compiled into a single document, and analyzed by at least two members of our team.

The resulting analysis was presented to the instructor and participating ID, and we collaboratively identified two to three potential design improvements for the subsequent development. After development, we met with the instructor and ID again to gather feedback on the process and suggestions for further iterations. Table 3 defines our data sources, which may be replicable at your institution.

Patterns in student engagement, such as limited average video watch time and inconsistency between video usage and grades, highlighted the need to reconsider content length, format, and delivery. These findings informed targeted course design changes aimed at improving clarity and aligning instructional materials with learner behaviors. Table 4 outlines some common findings, the impact they had on course design, and the corresponding recommendations.
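As an illustration of the two engagement patterns mentioned above, the following Python sketch computes the average watch fraction per video and a Pearson correlation between each student's average watch fraction and final grade. It is a minimal sketch, not our team's actual workflow: the record layout, the sample values, and the function names (`average_watch_by_video`, `pearson`, `watch_vs_grade`) are all hypothetical, and real LMS or video platform exports will differ.

```python
from statistics import mean

# Hypothetical sample records (not real data):
# (student_id, video_id, fraction_watched, final_grade_percent)
records = [
    ("s1", "v1", 0.90, 88.0),
    ("s1", "v2", 0.40, 88.0),
    ("s2", "v1", 0.20, 91.0),
    ("s2", "v2", 0.10, 91.0),
    ("s3", "v1", 0.75, 70.0),
    ("s3", "v2", 0.55, 70.0),
]


def average_watch_by_video(rows):
    """Return the mean fraction watched for each video."""
    by_video = {}
    for _, video_id, watched, _ in rows:
        by_video.setdefault(video_id, []).append(watched)
    return {video: mean(values) for video, values in by_video.items()}


def pearson(xs, ys):
    """Pearson correlation; returns None when either series is constant."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return None
    return cov / (var_x * var_y) ** 0.5


def watch_vs_grade(rows):
    """Correlate each student's mean watch fraction with their final grade."""
    watched_by_student = {}
    grade_by_student = {}
    for student_id, _, watched, grade in rows:
        watched_by_student.setdefault(student_id, []).append(watched)
        grade_by_student[student_id] = grade
    students = sorted(watched_by_student)
    xs = [mean(watched_by_student[s]) for s in students]
    ys = [grade_by_student[s] for s in students]
    return pearson(xs, ys)


if __name__ == "__main__":
    print(average_watch_by_video(records))
    print(watch_vs_grade(records))
```

A weak or negative correlation in output like this would only flag an inconsistency worth discussing with the instructor; on its own it does not explain why students watched less.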

One key takeaway concerned data interpretation. Quantitative data rarely implies a single, definitive conclusion. Even with cleaner, more comprehensive data, multiple interpretations are always possible. For example, one instructor’s interpretation of low video watch percentage led to the conclusion that students were cheating, despite the competing explanation that students may have been reading video transcripts instead of pressing play. To support more actionable analysis, we have found it essential to complement the quantitative data with qualitative inputs, such as instructor interviews and user experience.

We also found that, when presenting the data to instructors, it was important to share positive indications in the data, since focusing only on areas for improvement can create a critical environment. Healthy working relationships are essential for any successful development, and that remains true in this context.

We have observed the challenge of scaling this process. While we have considered scalability throughout our pilot, the raw data is complex and not easily interpretable without considerable intervention. Automating parts of this process is essential, but that automation, often built using scripts in Python or R, requires a significant up-front investment.
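To give a sense of what a first automation step might look like, the sketch below combines several CSV exports into one table, normalizing column names and tagging each row with its source. It is a hedged example using only the Python standard library; the function names (`normalize`, `compile_exports`, `to_csv`), the column headers, and the sample exports are hypothetical and do not represent our team's scripts or any particular LMS export format.

```python
import csv
import io


def normalize(header):
    """Normalize a column header, e.g. 'Student ID' -> 'student_id'."""
    return header.strip().lower().replace(" ", "_")


def compile_exports(exports):
    """Combine CSV exports into one list of rows.

    `exports` maps a source label (hypothetical, e.g. 'lms') to CSV text.
    Each output row gains a 'source' column naming where it came from.
    """
    rows = []
    for label, text in exports.items():
        reader = csv.DictReader(io.StringIO(text))
        for raw in reader:
            # Skip overflow fields (key None) and treat missing values as "".
            row = {
                normalize(key): (value or "").strip()
                for key, value in raw.items()
                if key is not None
            }
            row["source"] = label
            rows.append(row)
    return rows


def to_csv(rows):
    """Write the combined rows to CSV text, using the union of all columns."""
    columns = []
    for row in rows:
        for key in row:
            if key not in columns:
                columns.append(key)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, restval="")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical sample exports (not real data).
lms_export = "Student ID,Page Views\ns1,42\ns2,17\n"
video_export = "student id,Minutes Watched\ns1,12.5\n"

if __name__ == "__main__":
    combined = compile_exports({"lms": lms_export, "video": video_export})
    print(to_csv(combined))
```

Even a small script like this still requires someone to verify that columns from different systems mean the same thing, which is part of the up-front investment described above.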

Our team encountered several challenges when building out a sustainable learning analytics practice. University limitations posed immediate constraints, particularly around data governance and access restrictions. For example, at our faculty-governed institution, access to course survey data is protected, limiting our ability to leverage those insights. As a result, we sought out alternative sources for data that could inform our design decisions.

The iterative nature of this work meant that we were confronting unknown challenges as they arose, which at times derailed timelines and expectations. Testing and iteration cycles, which occurred approximately once every 16 weeks due to our development schedule, required significant time and bandwidth from an already stretched team. We continue to navigate bandwidth constraints and explore how to scale these practices to maximize impact without depleting our resources.

Perhaps most critically, interpreting data analytics introduced the risk of misleading conclusions. Our team explored the ethical use of learning analytics (Center for the Analytics of Learning and Teaching, n.d.) and determined how best to interpret our findings while doing our best to control for bias and faulty analysis. To mitigate these risks, we gathered context from instructors, amplified student voices in our studies, and compared findings across multiple data points before drawing conclusions. This multi-source validation approach helped ensure that our hypotheses accurately reflected the reality of the learner experience rather than a premature conclusion (Samuelsen, Chen, & Wasson, 2019).

One of our most effective approaches involved talking with individuals within and outside our institution to understand their goals and practices and identify replicable strategies (Brown et al., 2022). This consultation-based approach created a foundation for informed decision-making and helped avoid reinventing existing solutions. In this article, we aimed to provide a similar consultation resource that others may replicate within their own institutional contexts.

Moving forward, our team is continuing to let our research questions guide our analytics priorities and approach. We are researching how others have defined learner success and engagement and determining how we can replicate those definitions in our ecosystem. Video analytics present an immediate opportunity for examining overall engagement (for example, video watch patterns and video density per course) and how it relates to the impact of multimedia on learning outcomes. Simultaneously, we are focused on documenting our processes and developing scalable frameworks that can reduce the resource load.

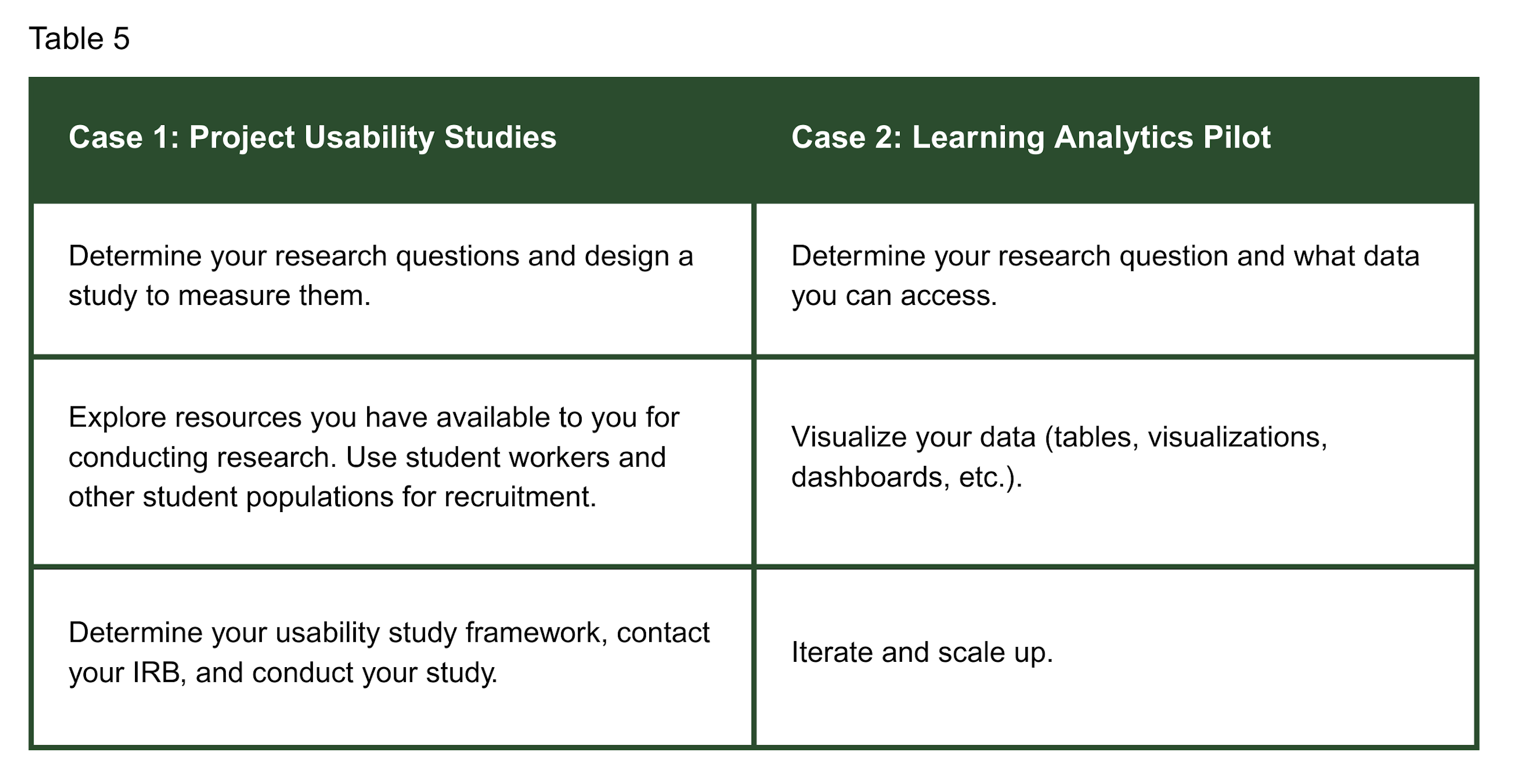

Table 5 details replicable elements from each study that you can incorporate at your institution:

This work exemplifies PBS by contextualizing research within our specific institutional audience. Rather than abstract, theoretical analysis, PBS demands actionable insights that directly inform practice. Our team’s work on usability studies and learning analytics has directly informed our instructional design practice, ensuring our findings translate into meaningful interventions that address the real learner experience in our unique university environment.